Toviah Moldwin, Ph.D.

Computational neuroscientist, machine learning engineer, and data scientist.

I am Toviah Moldwin, a researcher at the intersection of artificial intelligence and neuroscience. My Ph.D. research focused on how plasticity in individual neurons in the brain relates to the algorithms used in modern machine learning, including perceptron learning in biophysical neurons, classification via dendritic nonlinearities, heterosynaptic plasticity, and calcium-based learning rules.

More recently, during my postdoctoral research, I have branched out into connectomics (including computer vision analysis of electron microscopy datasets), spiking neural network dynamics, and transformer interpretability.

I also write a blog, Dendwrite; sing and play guitar for Synfire Chain; and am the founder and CEO of NotAZombie: Dating for People with Brains, a text-centric dating app.

If you are interested in hiring me for a full-time or contract position, please reach out to me at the email address listed on my resume.

My research focuses on how single neurons and dendrites implement learning: calcium-based synaptic plasticity, dendritic computation, and brain-inspired machine learning.

Papers

The Calcitron: A Simple Neuron Model That Implements Many Learning Rules via the Calcium Control Hypothesis

Theoretical neuroscientists and machine learning researchers have proposed a variety of learning rules to enable artificial neural networks to effectively perform both supervised and unsupervised learning tasks. It is not always clear, however, how these theoretically-derived rules relate to biological mechanisms of plasticity in the brain, or how these different rules might be mechanistically implemented in different contexts and brain regions. This study shows that the calcium control hypothesis, which relates synaptic plasticity in the brain to the calcium concentration ([Ca2+]) in dendritic spines, can produce a diverse array of learning rules. We propose a simple, perceptron-like neuron model, the calcitron, that has four sources of [Ca2+]: local (following the activation of an excitatory synapse and confined to that synapse), heterosynaptic (resulting from the activity of other synapses), postsynaptic spike-dependent, and supervisor-dependent. We demonstrate that by modulating the plasticity thresholds and calcium influx from each calcium source, we can reproduce a wide range of learning and plasticity protocols, such as Hebbian and anti-Hebbian learning, frequency-dependent plasticity, and unsupervised recognition of frequently repeating input patterns. Moreover, by devising simple neural circuits to provide supervisory signals, we show how the calcitron can implement homeostatic plasticity, perceptron learning, and BTSP-inspired one-shot learning. Our study bridges the gap between theoretical learning algorithms and their biological counterparts, not only replicating established learning paradigms but also introducing novel rules.
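As a rough illustration of the idea in this abstract, the sketch below sums four calcium sources at each synapse and applies a two-threshold plasticity rule. The coefficient names (`alpha`, `beta`, `gamma`, `delta`), the threshold values, the use of total input activity for the heterosynaptic term, and the fixed-step weight change are all illustrative assumptions, not the paper's exact formulation.

```python
import numpy as np

def calcitron_step(w, x, supervisor=0.0, alpha=0.0, beta=0.0, gamma=0.0,
                   delta=0.0, theta_d=0.5, theta_p=1.0, bias=0.5, eta=0.1):
    """One plasticity step of a calcitron-like neuron (hypothetical sketch).

    w: synaptic weights, x: binary input pattern (both 1-D arrays).
    Returns the updated weights, per-synapse calcium, and the output spike.
    """
    # Perceptron-like output: spike if the weighted input exceeds the bias.
    y = float(np.dot(w, x) > bias)

    # Per-synapse calcium from four sources (assumed to add linearly):
    # local, heterosynaptic, postsynaptic-spike-dependent, supervisor-dependent.
    ca = alpha * x + beta * np.sum(x) + gamma * y + delta * supervisor

    # Calcium control hypothesis: high calcium potentiates, intermediate
    # calcium depresses, low calcium leaves the weight unchanged.
    dw = np.where(ca >= theta_p, eta, np.where(ca >= theta_d, -eta, 0.0))
    return w + dw, ca, y

# Example: local plus spike-dependent calcium only.
w = np.array([1.0, 1.0, 0.0])
x = np.array([1.0, 0.0, 1.0])
new_w, ca, y = calcitron_step(w, x, alpha=0.6, gamma=0.6)
```

With these assumed parameters, synapses that are active when the neuron spikes cross the potentiation threshold, while inactive synapses receive only spike-dependent calcium and fall in the depression range. Other choices of coefficients and thresholds would yield different rules.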

A Generalized Framework for the Calcium Control Hypothesis Describes Weight-Dependent Synaptic Changes in Behavioral Time Scale Plasticity

The brain modifies synaptic strengths to store new information via long-term potentiation (LTP) and long-term depression (LTD). Evidence has mounted that long-term plasticity is controlled via concentrations of calcium ([Ca2+]) in postsynaptic spines. Several mathematical models describe this phenomenon, including those of Shouval, Bear, and Cooper (SBC) and Graupner and Brunel (GB). Here we suggest a generalized version of the SBC and GB models, based on a fixed point–learning rate (FPLR) framework, where the synaptic [Ca2+] specifies a fixed point toward which the synaptic weight approaches asymptotically at a [Ca2+]-dependent rate. The FPLR framework offers a straightforward phenomenological interpretation of calcium-based plasticity: the calcium concentration tells the synaptic weight where it is going and how fast it goes there. The framework can flexibly incorporate various experimental findings, including multiple regions of [Ca2+] where no plasticity occurs, or plasticity in cerebellar Purkinje cells where the directionality of calcium-based synaptic changes is thought to be reversed relative to cortical and hippocampal neurons. We also suggest a modeling approach that captures the dependency of late-phase plasticity stabilization on protein synthesis. We demonstrate that due to the asymptotic, saturating nature of synaptic changes in the FPLR rule, the results of frequency- and spike-timing–dependent plasticity protocols are weight-dependent. Finally, we show how the FPLR framework can explain plateau potential–induced place field formation in hippocampal CA1 neurons, also known as behavioral time scale plasticity (BTSP).
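The "where it is going and how fast it goes there" interpretation can be sketched as a simple Euler update in which the calcium level selects a target weight and a rate. The step-function shape, the threshold values, and all parameter names below are assumptions made for illustration; the paper's actual functions may differ.

```python
def fplr_step(w, ca, dt=1.0, theta_d=0.5, theta_p=1.0,
              f_dep=0.0, f_pot=1.0, eta_dep=0.05, eta_pot=0.1):
    """One Euler step of a fixed point-learning rate rule (hypothetical sketch)."""
    if ca >= theta_p:
        target, rate = f_pot, eta_pot   # potentiating calcium range
    elif ca >= theta_d:
        target, rate = f_dep, eta_dep   # depressing calcium range
    else:
        return w                        # sub-threshold calcium: no plasticity
    # The weight moves a fraction of the remaining distance to the fixed point,
    # so the change shrinks as the weight approaches its target.
    return w + dt * rate * (target - w)

def run_protocol(w0, ca_trace, **kwargs):
    """Apply fplr_step along a calcium trace, starting from weight w0."""
    w = w0
    for ca in ca_trace:
        w = fplr_step(w, ca, **kwargs)
    return w

# The same potentiating protocol applied to a weak and a strong synapse.
trace = [1.2] * 10
weak_gain = run_protocol(0.2, trace) - 0.2
strong_gain = run_protocol(0.8, trace) - 0.8
```

Because each step covers a fixed fraction of the distance to the target, the weak synapse gains more than the strong one under the identical protocol, which is one way to see the weight dependence described in the abstract.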

Asymmetric Voltage Attenuation in Dendrites Can Enable Hierarchical Heterosynaptic Plasticity

Long-term synaptic plasticity is mediated via cytosolic calcium concentrations ([Ca2+]). Using a synaptic model that implements calcium-based long-term plasticity via two sources of Ca2+—NMDA receptors and voltage-gated calcium channels (VGCCs)—we show in dendritic cable simulations that the interplay between these two calcium sources can result in a diverse array of heterosynaptic effects. When spatially clustered synaptic input produces a local NMDA spike, the resulting dendritic depolarization can activate VGCCs at nonactivated spines, resulting in heterosynaptic plasticity. NMDA spike activation at a given dendritic location will tend to depolarize dendritic regions that are located distally to the input site more than dendritic sites that are proximal to it. This asymmetry can produce a hierarchical effect in branching dendrites, where an NMDA spike at a proximal branch can induce heterosynaptic plasticity primarily at branches that are distal to it. We also explored how simultaneously activated synaptic clusters located at different dendritic locations synergistically affect the plasticity at the active synapses, as well as the heterosynaptic plasticity of an inactive synapse "sandwiched" between them. We conclude that the inherent electrical asymmetry of dendritic trees enables sophisticated schemes for spatially targeted supervision of heterosynaptic plasticity.

The gradient clusteron: A model neuron that learns to solve classification tasks via dendritic nonlinearities, structural plasticity, and gradient descent

Synaptic clustering on neuronal dendrites has been hypothesized to play an important role in implementing pattern recognition. Neighboring synapses on a dendritic branch can interact in a synergistic, cooperative manner via nonlinear voltage-dependent mechanisms, such as NMDA receptors. Inspired by the NMDA receptor, the single-branch clusteron learning algorithm takes advantage of location-dependent multiplicative nonlinearities to solve classification tasks by randomly shuffling the locations of "under-performing" synapses on a model dendrite during learning ("structural plasticity"), eventually resulting in synapses with correlated activity being placed next to each other on the dendrite. We propose an alternative model, the gradient clusteron, or G-clusteron, which uses an analytically-derived gradient descent rule where synapses are "attracted to" or "repelled from" each other in an input- and location-dependent manner. We demonstrate the classification ability of this algorithm by testing it on the MNIST handwritten digit dataset and show that, when using a softmax activation function, the accuracy of the G-clusteron on the all-versus-all MNIST task (~85%) approaches that of logistic regression (~93%). In addition to the location update rule, we also derive a learning rule for the synaptic weights of the G-clusteron ("functional plasticity") and show that a G-clusteron that utilizes the weight update rule can achieve ~89% accuracy on the MNIST task. We also show that a G-clusteron with both the weight and location update rules can learn to solve the XOR problem from arbitrary initial conditions.

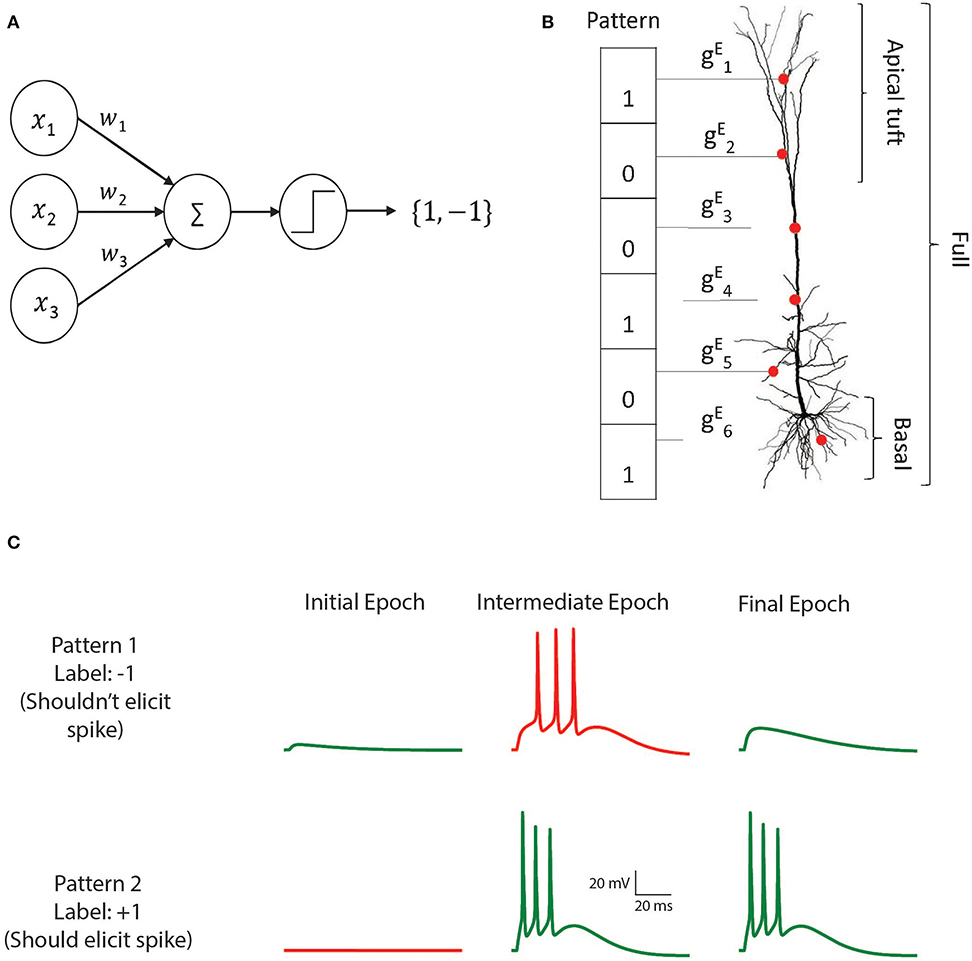

Perceptron Learning and Classification in a Modeled Cortical Pyramidal Cell

The perceptron learning algorithm and its multiple-layer extension, the backpropagation algorithm, are the foundations of the present-day machine learning revolution. However, these algorithms utilize a highly simplified mathematical abstraction of a neuron; it is not clear to what extent real biophysical neurons with morphologically-extended non-linear dendritic trees and conductance-based synapses can realize perceptron-like learning. Here we implemented the perceptron learning algorithm in a realistic biophysical model of a layer 5 cortical pyramidal cell with a full complement of non-linear dendritic channels. We tested this biophysical perceptron (BP) on a classification task, where it needed to correctly perform binary classification of 100, 1,000, or 2,000 patterns, and a generalization task, where it was required to discriminate between two "noisy" patterns. We show that the BP performs these tasks with an accuracy comparable to that of the original perceptron, though the classification capacity of the apical tuft is somewhat limited. We conclude that cortical pyramidal neurons can act as powerful classification devices.
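For reference, the classical perceptron learning rule mentioned in this abstract can be sketched in a few lines. This is the abstract point-neuron version only, not the biophysical model from the paper; the function names and the toy dataset are illustrative assumptions.

```python
def predict(w, b, x):
    """Binary output of a perceptron: 1 if the weighted sum exceeds zero."""
    return 1 if sum(wi * xi for wi, xi in zip(w, x)) + b > 0 else 0

def train_perceptron(patterns, labels, epochs=100, lr=1.0):
    """Classical perceptron learning on binary-labeled patterns."""
    w = [0.0] * len(patterns[0])
    b = 0.0
    for _ in range(epochs):
        errors = 0
        for x, target in zip(patterns, labels):
            error = target - predict(w, b, x)   # +1, 0, or -1
            if error != 0:
                # Move the weights toward (or away from) the misclassified input.
                w = [wi + lr * error * xi for wi, xi in zip(w, x)]
                b += lr * error
                errors += 1
        if errors == 0:   # every pattern classified correctly
            break
    return w, b

# Toy linearly separable task (logical AND), used only for illustration.
patterns = [[0, 0], [0, 1], [1, 0], [1, 1]]
labels = [0, 0, 0, 1]
w, b = train_perceptron(patterns, labels)
```

On linearly separable data such as this toy task, the rule is guaranteed to converge to weights that classify every pattern correctly.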

Statistical Learning of Melodic Patterns Influences the Brain's Response to Wrong Notes

Statistical learning is a fundamental mechanism that allows the brain to automatically extract structure from the environment. We examined whether implicit statistical learning of melodic patterns influences what is held in auditory working memory and the brain's response to violations of that structure. Event-related potentials (ERPs) were recorded in response to tone sequences that contained statistically cohesive melodic patterns. We found that statistical learning of melodic structure influences the brain's response to "wrong" notes, demonstrating a direct link between statistical learning and neurophysiological measures of expectation and memory.

View all publications → Google Scholar